The Agents Are Addictive

In the most recent episode of the State of Agentic Coding podcast, Armin Ronacher made a comment that caught my ear: “The experience that you get from using an agent is basically being on drugs.” I have experienced this feeling. Anyone who uses coding agents probably has. It’s not something I would ascribe to drug use (I wouldn’t know anything about that anyway), but something I would place in the realm of slot machines and social media algorithms. But why would I place it there?

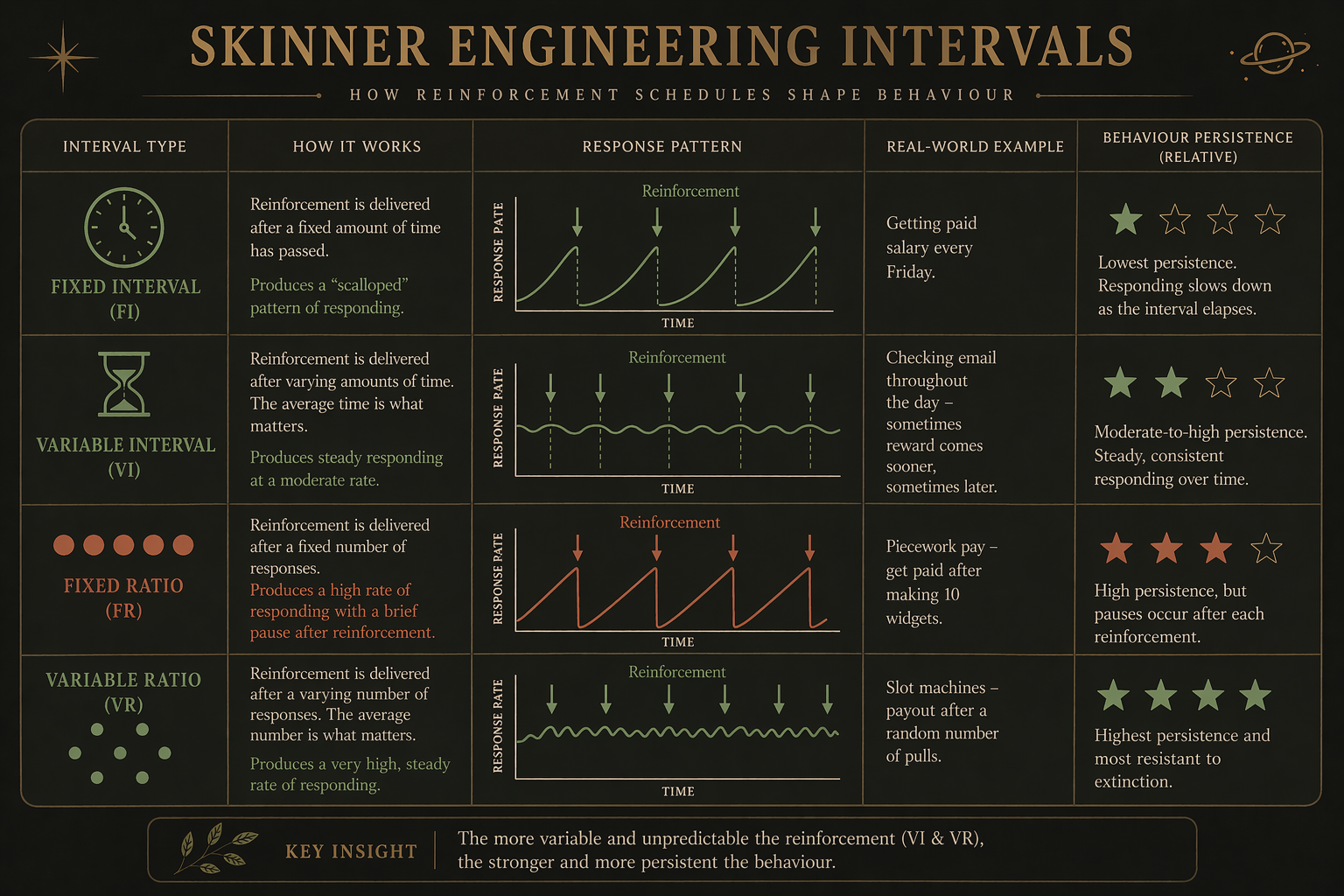

In short, it’s well studied how you can condition people to engage in certain behaviors, such as working, doomscrolling, or mindless gambling. Thanks to the work of B.F. Skinner, we know there are four main ways to condition behavior in subjects, whether animals or humans. All you need is a condition and a reward. Tinkering with the timing and ratio of reward delivery determines the strength of the conditioning. Most people who program are probably not familiar with Skinner, so they can’t accurately name this pulling feeling: sitting at the computer for hours on end, looking at something, going and going and going, on and on and on. Why do we feel drawn to do this, even when we know that spewing out all this work is unlikely to be productive?

Fundamentally, coding agents operate on a hybrid schedule of reinforcement: a fixed ratio at the planning and inner-loop levels, but a variable ratio at the problem-solving level. This mirrors how humans work through structured workflows or to-do lists versus exploratory debugging. However, for the human in the loop receiving the agent’s output, it’s hard to know when the agent will do exactly what you expect, even with well-drawn task lists. So we are left uncertain about when the work will be done, and this is what keeps dragging us to watch its every move. Will it debug correctly this time? Will the tests pass? Is the JSON structure being returned with all the details I asked for? Is it considering what I said 3 prompts ago?
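To make the difference between the two ratios concrete, here is a rough Python sketch. It is my own toy model, not anything from Skinner or from how agents are actually built: the function names are made up, and I am assuming that a variable ratio can be approximated by giving every action the same small chance of paying off (strictly speaking, a random-ratio schedule).

```python
import random


def fixed_ratio_gaps(ratio, rewards):
    """Actions needed before each reward when every `ratio`-th action pays off."""
    return [ratio] * rewards


def variable_ratio_gaps(mean_ratio, rewards, rng):
    """Actions needed before each reward when each action pays off
    with probability 1 / mean_ratio (an assumed simplification)."""
    gaps = []
    for _ in range(rewards):
        actions = 1
        # Keep acting until this action happens to be rewarded.
        while rng.random() >= 1 / mean_ratio:
            actions += 1
        gaps.append(actions)
    return gaps


rng = random.Random(0)  # seeded so repeated runs give the same sequence
fixed = fixed_ratio_gaps(5, 10)
variable = variable_ratio_gaps(5, 10, rng)

print("fixed ratio:   ", fixed)
print("variable ratio:", variable)
```

On the fixed schedule every gap is identical, so you always know how far away the next reward is. On the variable schedule the gaps average out to roughly the same number over many rewards, but any single prompt might be the one that pays off, and that is the part that keeps you watching.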

This is not to oversimplify or ignore the more cognitive concerns that arise from continued, undistracted use of these sycophantic, speedy helpers. But it is one of the main reasons you likely feel a pull to keep prompting and speeding up development rather than slowing down and thinking through a problem. You’re not lazy; you’re stuck in a variable-reward loop that rewards motion rather than deliberate thought.